RESEARCH

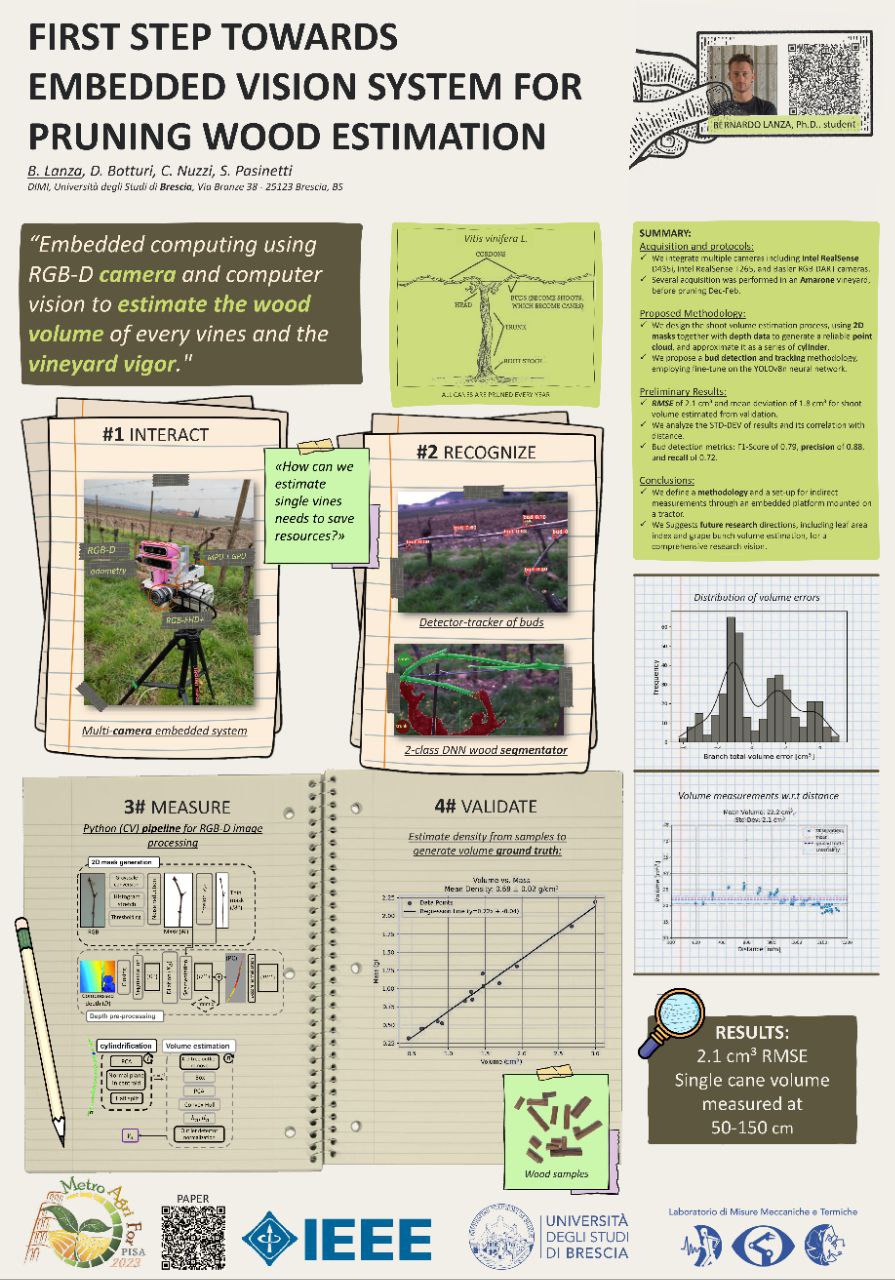

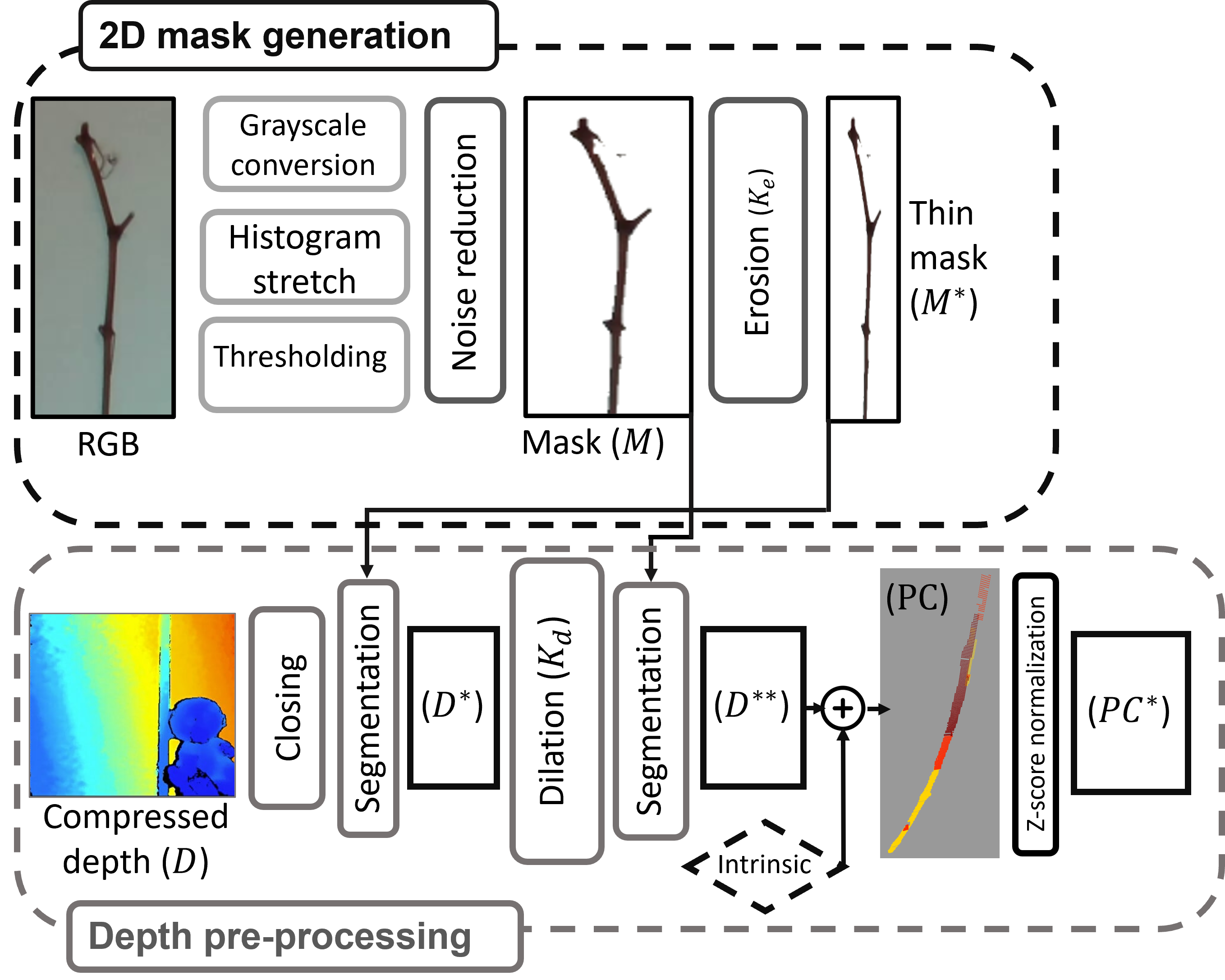

Optical Measurement of Vine Wood Volume and Spring Bud Development

Problem: Measuring vine woody volume and monitoring spring buds in real field conditions is difficult with manual inspections and inconsistent visual assessments.

Method: Developed an RGB-D and deep-learning pipeline for 3D vine volume estimation, plus automated bud detection, tracking, recognition, and counting during early seasonal growth.

Result: The wood-volume pipeline achieved an RMSE of 2.1 cm³ with a mean deviation of 1.8 cm³, while the fine-tuned YOLOv8 bud detector reached an F1-score of 0.79 on a custom vineyard dataset.
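
For readers less familiar with the metrics quoted above, the following is a minimal sketch of how they are conventionally computed. It is not the project's evaluation code. The function names are hypothetical, and "mean deviation" is assumed here to mean the mean absolute difference between estimated and reference volumes, which the summary does not specify.

```python
import math


def rmse(predicted, reference):
    """Root-mean-square error between paired measurements (same units as the inputs)."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(p - r) ** 2 for p, r in zip(predicted, reference)]
    return math.sqrt(sum(squared) / len(squared))


def mean_abs_deviation(predicted, reference):
    """Mean absolute difference; an assumed reading of 'mean deviation'."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of precision and recall computed from detection counts."""
    precision = true_pos / (true_pos + false_pos) if (true_pos + false_pos) else 0.0
    recall = true_pos / (true_pos + false_neg) if (true_pos + false_neg) else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)
```

In a detection setting, the counts passed to `f1_score` would come from matching predicted bud boxes to annotated ones at some overlap threshold; the threshold used in the study is not stated here.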

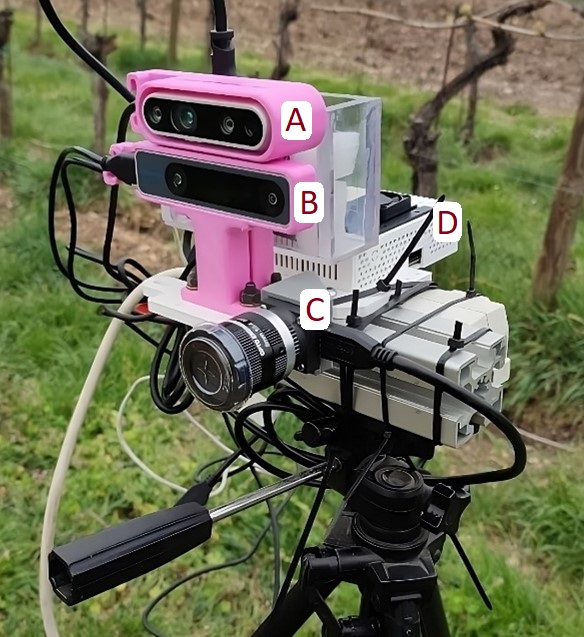

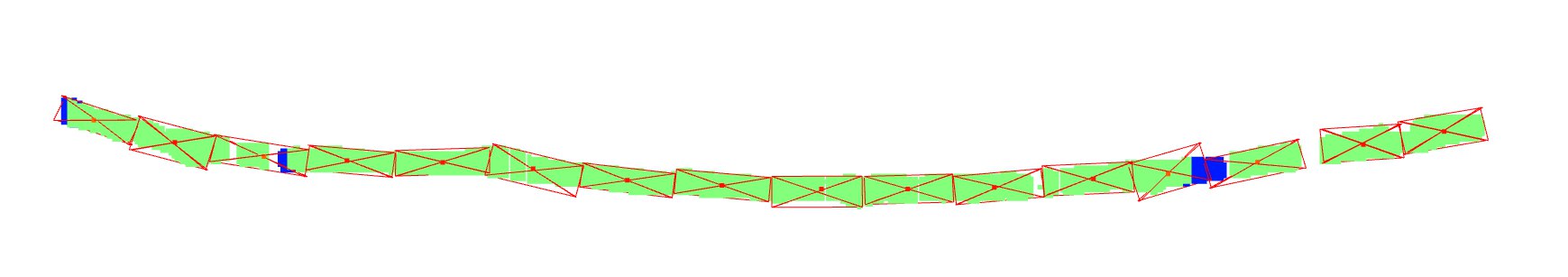

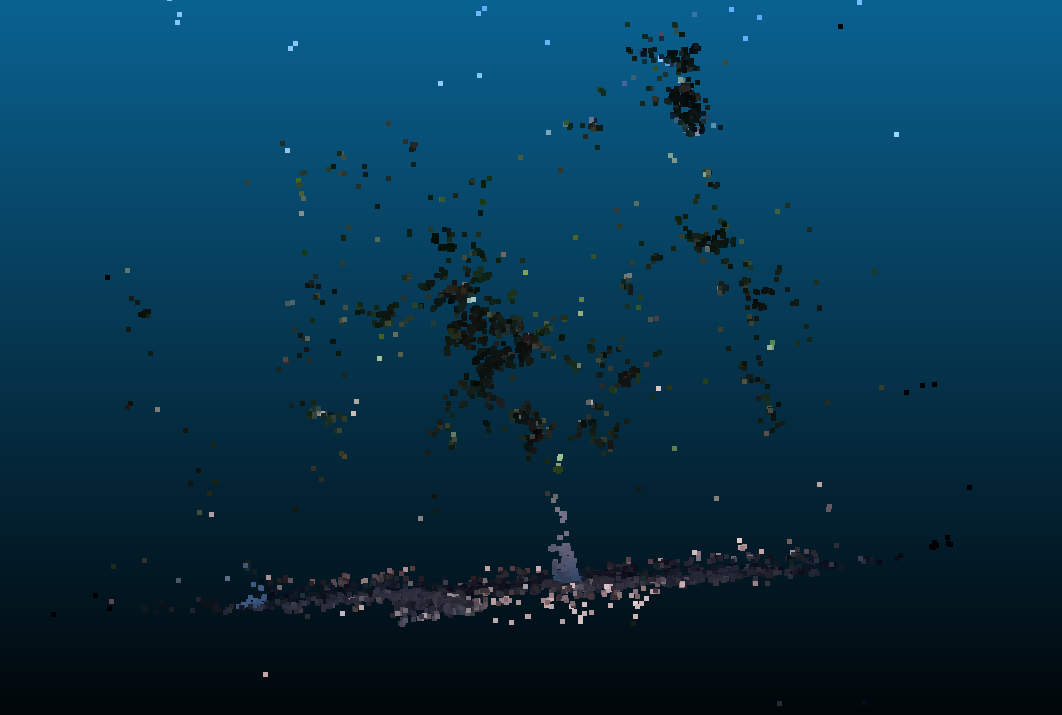

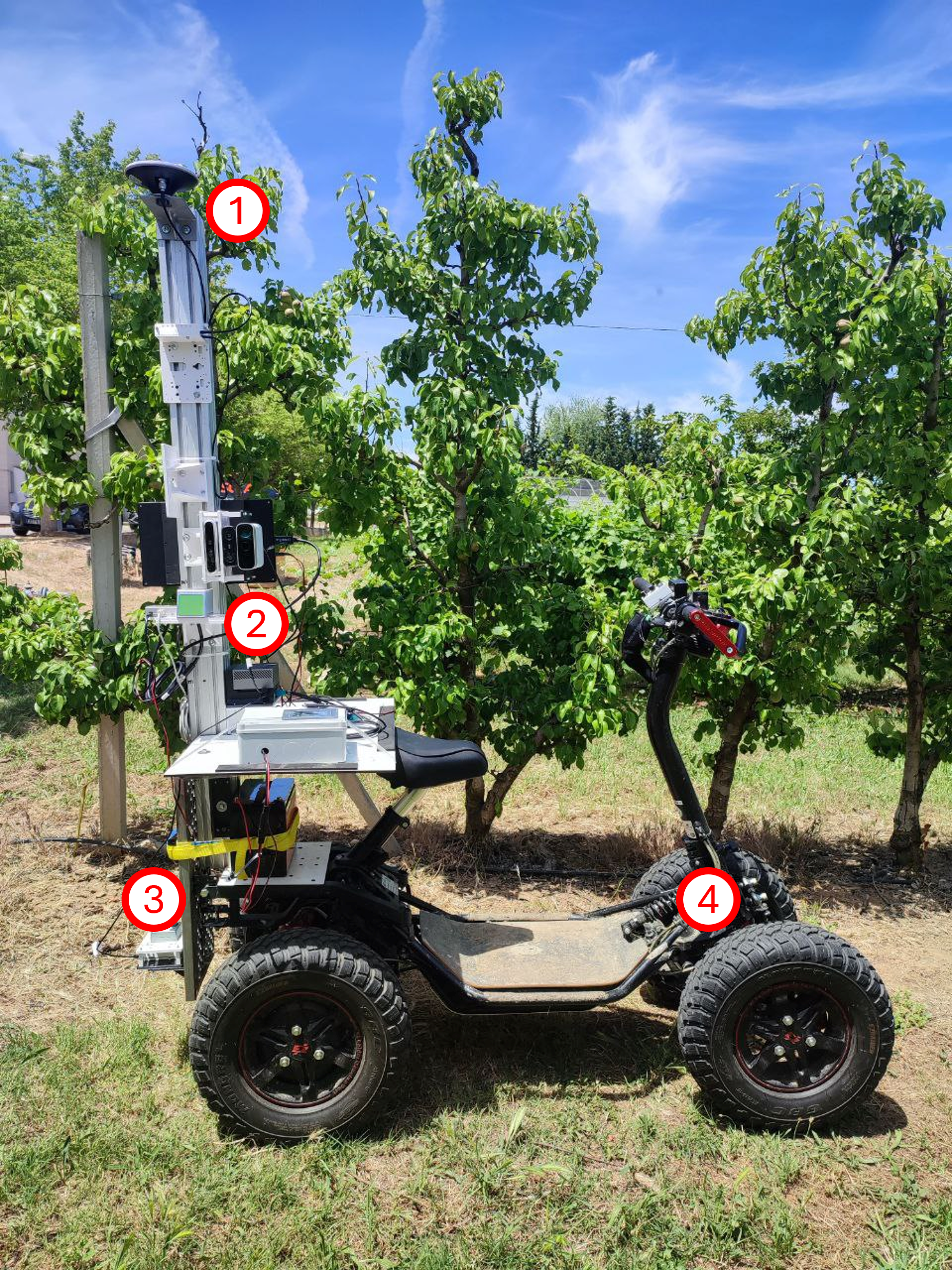

Mobile Multi-Sensor Embedded System for 3D Orchard Reconstruction

Problem: Accurate 3D orchard reconstruction is expensive and fragile in harsh field environments.

Method: Low-cost multi-sensor fusion (RGB-D, GNSS, IMU) with a custom SLAM approach tailored for agriculture.

Result: Validated 3D reconstructions and geometric measurements with edge-compatible acquisition workflows.

GitHub Repository: Hierarchy-Robust-SLAM

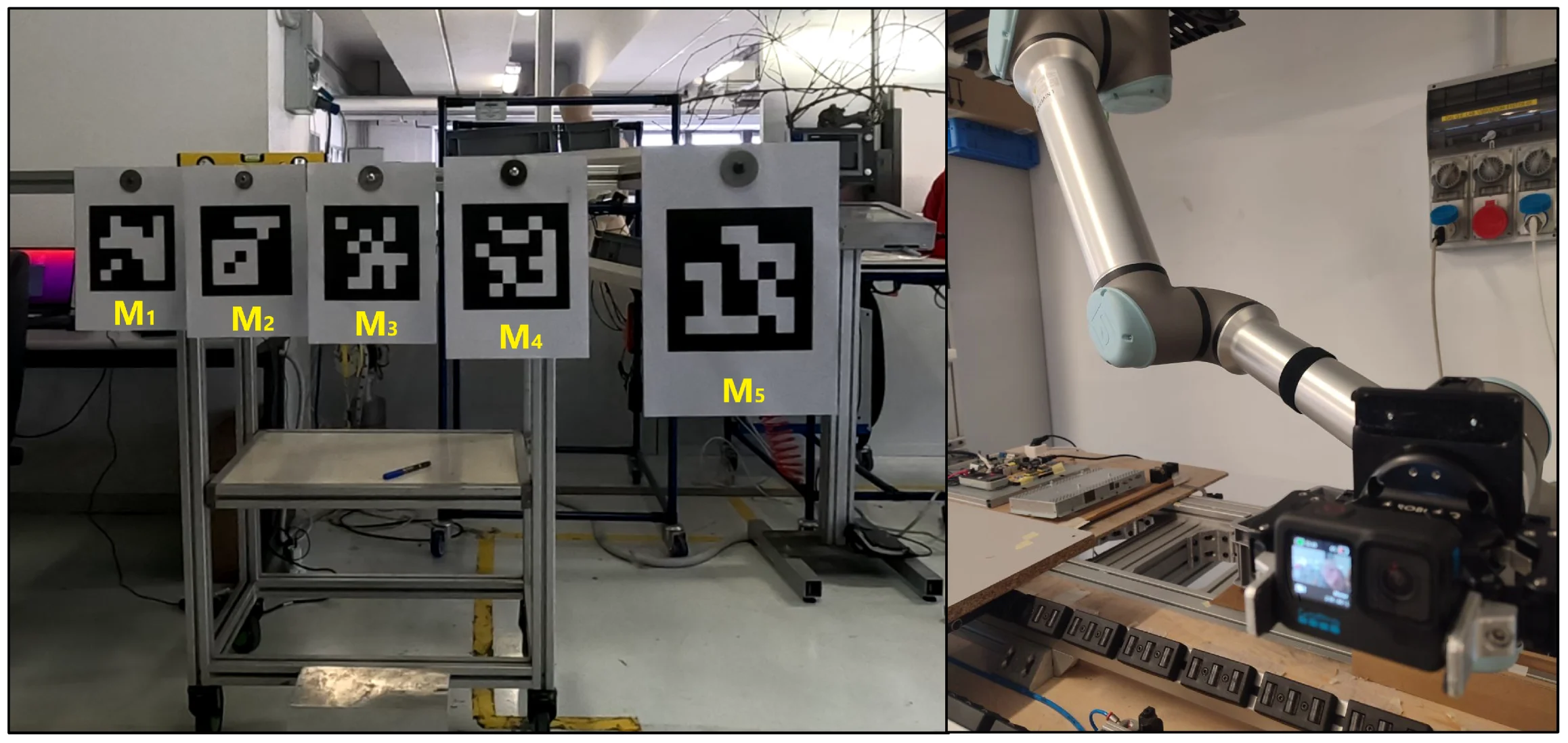

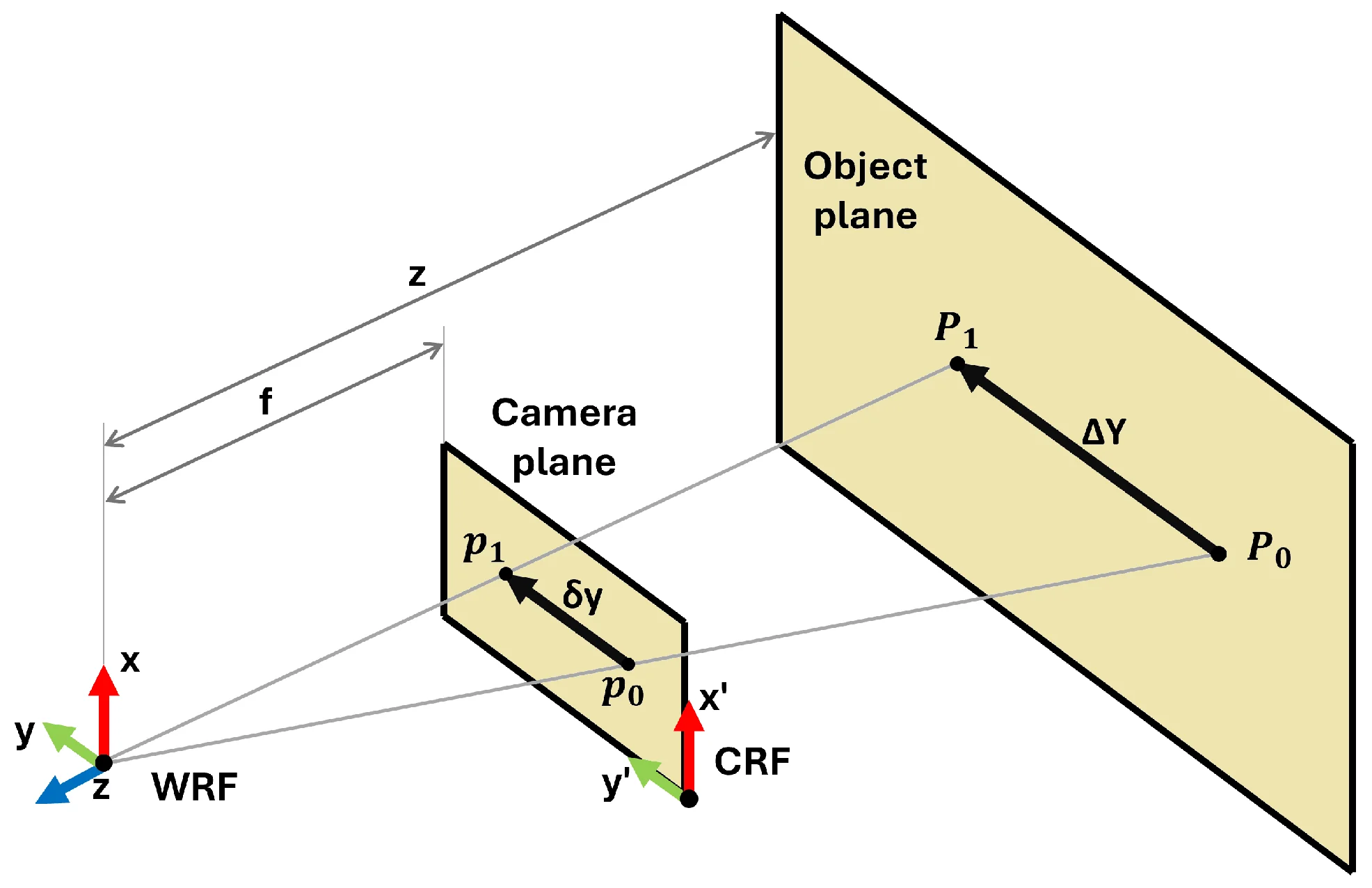

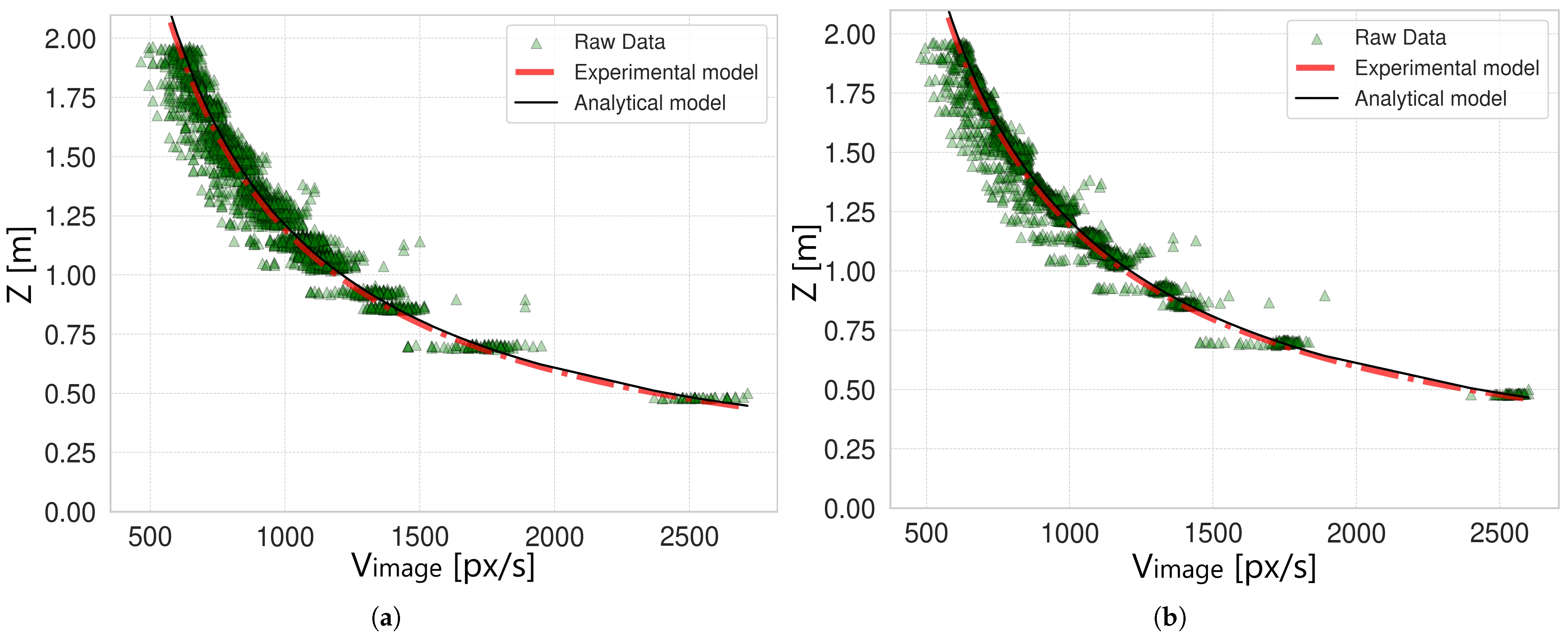

Monocular Depth Estimation from Optical Flow with Uncertainty Analysis

Problem: Low-cost depth estimation from a single moving camera is noisy and lacks formal measurement uncertainty. This is a gap for agricultural end-users who need reliable readings without expensive LiDAR or stereo hardware.

Method: Simplified optical-flow model relating image-plane pixel speed to real-world depth via a single calibration parameter K. Validated in the laboratory with a UR10e robot at five speeds (0.25–0.97 m/s), four camera-target distances, and five ArUco markers at different depths. Uncertainty propagated by Monte Carlo simulation with 10,000 synthetic realizations per data point. A window-based moving-average filter (effective 20 fps from 60 fps) reduces noise.
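
The steps in this method can be sketched as follows. This is an illustration under stated assumptions, not the released code: the model form Z = K · v / u (depth from camera speed v and image speed u) is the usual pinhole relation for a laterally translating camera and is assumed here, the Gaussian noise model is an assumption, and all function names are hypothetical. The window of 3 frames is likewise assumed from the 60 fps to 20 fps figure.

```python
import random
import statistics


def depth_from_flow(K, camera_speed, image_speed):
    """Assumed model: depth Z [m] = K [px] * v [m/s] / u [px/s]."""
    if image_speed <= 0:
        raise ValueError("image speed must be positive")
    return K * camera_speed / image_speed


def block_average(values, window=3):
    """Average non-overlapping windows; a trailing partial window is dropped.

    With window=3 a 60 fps signal becomes a 20 fps signal (assumed filter form).
    """
    n_blocks = len(values) // window
    return [
        sum(values[i * window:(i + 1) * window]) / window
        for i in range(n_blocks)
    ]


def monte_carlo_depth(K, v, u, sigma_K, sigma_v, sigma_u, n=10_000, seed=0):
    """Propagate Gaussian input uncertainties through the depth model.

    Returns (mean depth, standard deviation of depth) over n realizations.
    Assumes sigma_u is small compared with u, so sampled image speeds stay positive.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        K_s = rng.gauss(K, sigma_K)
        v_s = rng.gauss(v, sigma_v)
        u_s = rng.gauss(u, sigma_u)
        samples.append(depth_from_flow(K_s, v_s, u_s))
    return statistics.fmean(samples), statistics.stdev(samples)
```

Because u appears in the denominator, a fixed pixel-speed noise has a larger relative effect when the image speed is low, which is consistent with higher image speeds giving lower depth uncertainty.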

Result: Best-case depth uncertainty of 4 cm (filtered, 0.50 m/s) and 7 cm (filtered, 0.75 m/s). Image speeds above 500–800 px/s keep uncertainty below 20 cm. Two practical examples provided for end-users to select camera and vehicle speed. Code publicly released on GitHub.
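
As a rough illustration of how an end-user might turn the image-speed guideline into a vehicle speed, the sketch below inverts an assumed model u = K · v / Z. The model form, the function name, and the example values of K and Z are assumptions for illustration only; only the 500 px/s threshold comes from the result above.

```python
def min_vehicle_speed(min_image_speed, depth, K):
    """Smallest camera speed [m/s] giving at least min_image_speed [px/s]
    for a target at the given depth [m], under the assumed model u = K * v / Z."""
    if K <= 0 or depth <= 0:
        raise ValueError("K and depth must be positive")
    return min_image_speed * depth / K


# Hypothetical setup: calibration parameter K = 1000 px, target at 2 m,
# and the lower 500 px/s threshold quoted in the result.
required_speed = min_vehicle_speed(500.0, 2.0, 1000.0)
```

A camera with a larger K (for example, a longer focal length or a higher-resolution sensor) would reach the same image speed at a lower vehicle speed under this model.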

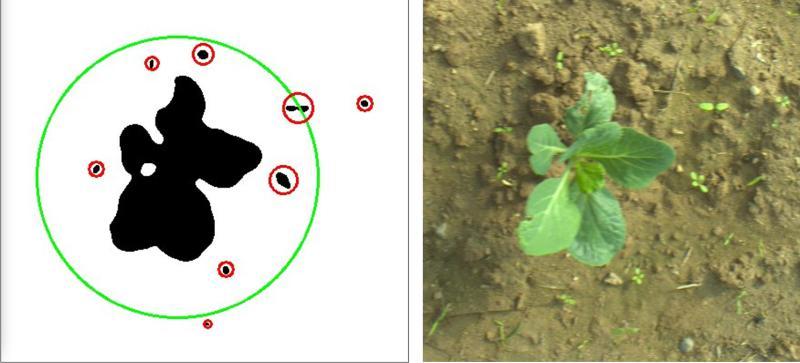

Vision Embedded System for Crop and Weed Recognition

Problem: Distinguishing crops from weeds in real time on agricultural machinery requires efficient onboard intelligence.

Method: Embedded computer-vision system using deep neural networks optimized for constrained edge hardware.

Result: Practical field-ready segmentation workflow to support precision agriculture operations.

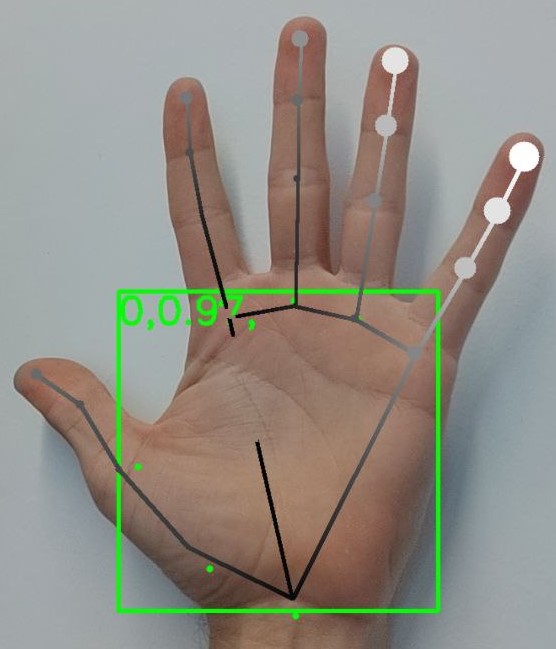

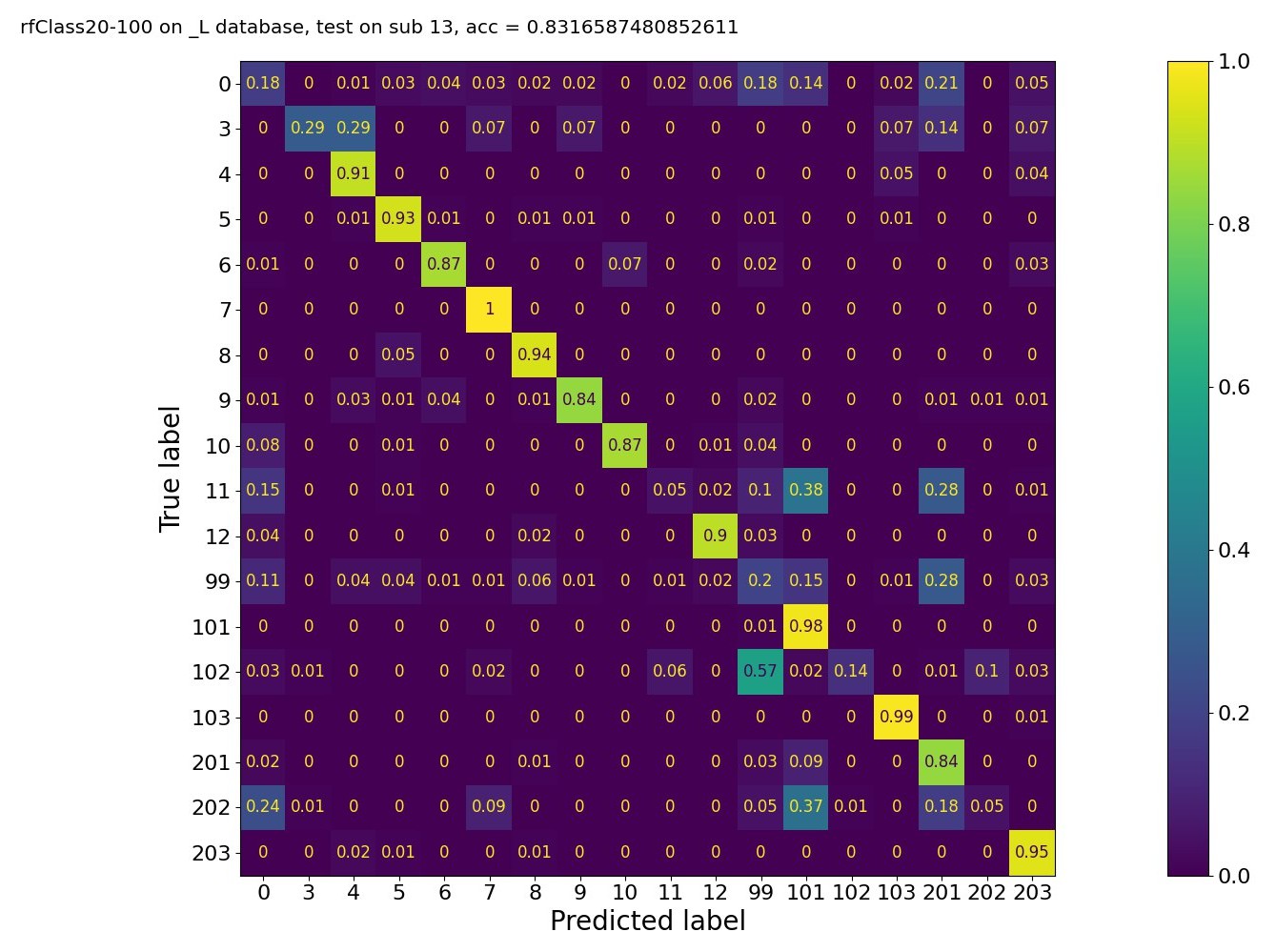

Gesture Recognition for Healthcare 4.0

Problem: Manual supervision of surgical handwashing is inconsistent and can increase infection risk.

Method: Vision-based hand and gesture recognition system with machine-learning analysis for procedure monitoring.

Result: Automated and objective assessment pipeline for handwashing compliance in clinical workflows.

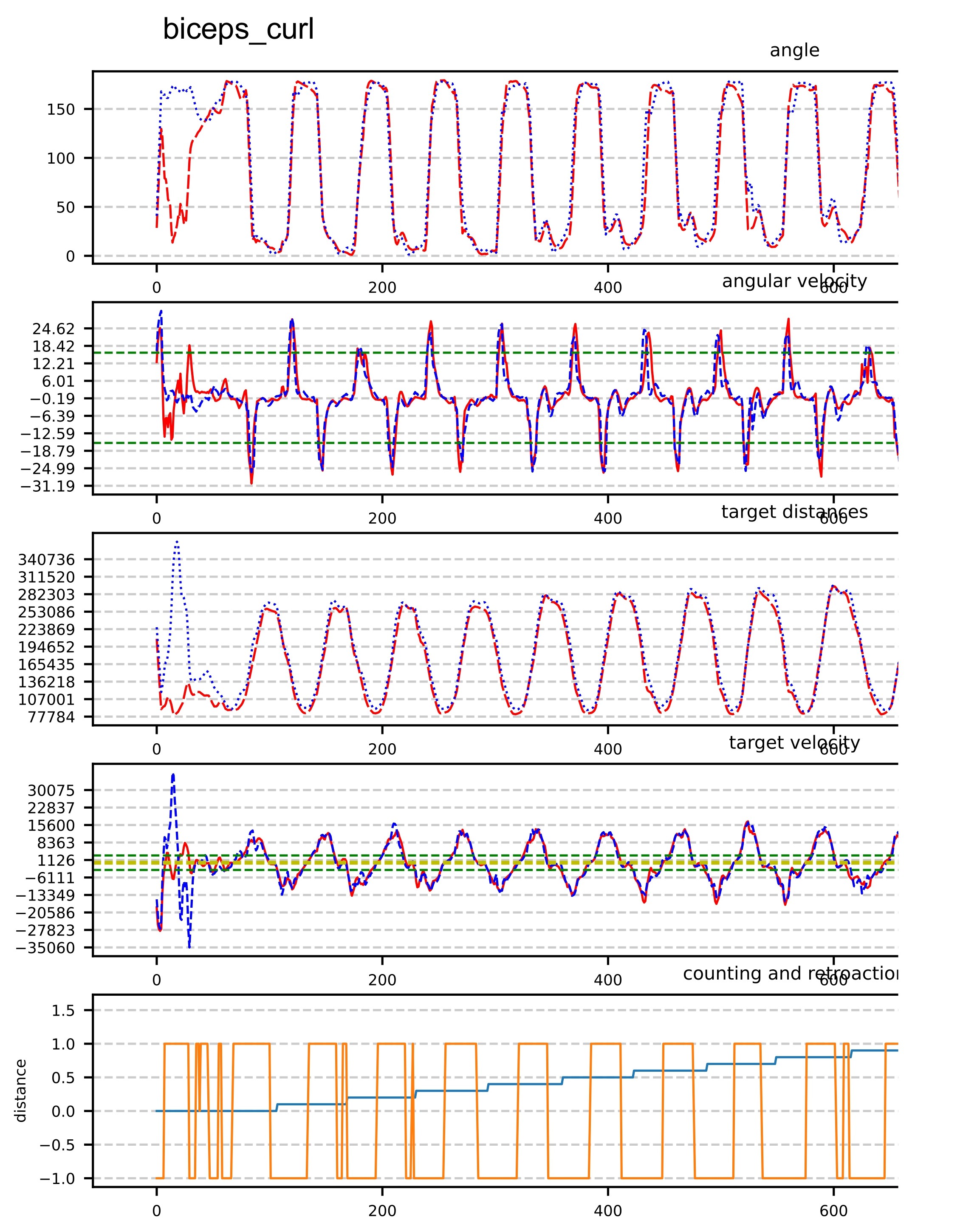

Vision System for Body and Gym Gesture Recognition

Problem: Reliable automatic recognition of exercise gestures is challenging in practical gym environments.

Method: Vision-based pose estimation and signal-processing pipeline for movement analysis and repetition evaluation.

Result: Functional smart-mirror prototype supporting real-time feedback for fitness training tasks.